Around this time of the year, offices around Australia get swept into fierce competition by the vicarious challenge that is footy tipping. While Janice from Accounting might not necessarily know her Nick Riewoldts from her Jacks, many fans take their tipping to the next level, joining online competitions with thousands of entrants and big cash prizes.

The Herald Sun (and to be fair, other newspapers with less easily accessible online records) also take part in the game. Their “Experts”, consisting mostly of ex-players and journalists, publish tips every week informing the public as to who they think will win each match.

Lastly, another type of pundit, one who works away from the limelight tirelessly crunching probabilities and possibilities, compiles its own weekly tips: the humble computer. A number of models and systems, using many different inputs, publish weekly tips and engage in their own tipping comp run by Monash University.

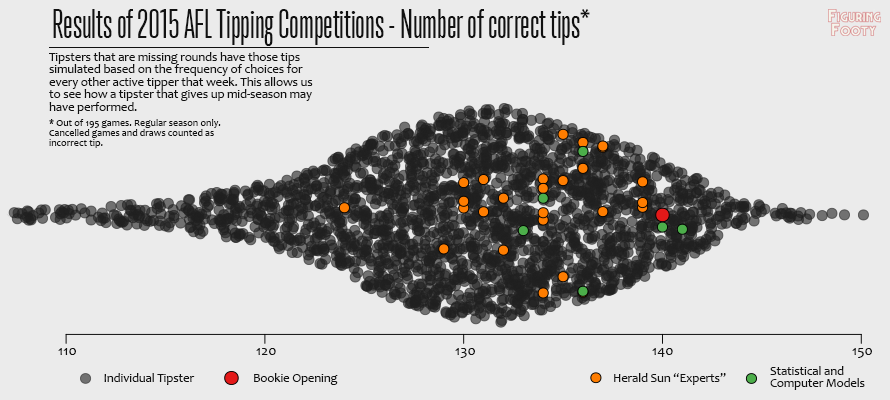

So how did these three groups fare in 2015?

Methodology

There is a big problem with looking at the results of tipping competitions, namely survivorship bias. People who aren’t being paid by a newspaper for their tips often lose interest partway through the year and stop registering their picks. This is bound to happen more to people who had a bad start to the year than to those who are winning. If we were to look only at the end-of-year results, we would see an unnaturally stretched-out field, as those who lose interest fall back. However, if we were to look only at the tipsters who registered tips for every round, we’d get an unnaturally strong group. Those who continue to register tips are more likely to be the ones who were successful early on, through luck or otherwise.

In order to get a more “fair” representation of how each tipster would have actually gone, I simulate any missed rounds for an individual tipster with a draw from the discrete distribution of that week’s results for every tipster that did get their picks in.

In non-maths terms, if you missed a week, I basically just picked another tipster from that week at random and gave you their result for the week. This means you’re more likely to get an average score, but to mimic the natural variability you could sometimes get a very good or very bad score. As a whole, we get a more realistic looking distribution of tipster results.
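The resampling described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the code used to produce the graph: the function name `fill_missed_rounds`, the data layout (a dict mapping each tipster to a list of weekly scores, with `None` marking a missed round) and the example numbers are all made up for the purpose.

```python
import random


def fill_missed_rounds(scores, rng=None):
    """Replace each missed round (None) with a score drawn at random from
    the tipsters who did submit tips that round.

    `scores` maps tipster name -> list of weekly correct-tip counts.
    All lists are assumed to have the same length (one entry per round).
    """
    if not scores:
        return {}
    rng = rng or random.Random()
    n_rounds = len(next(iter(scores.values())))
    # Work on copies so the original data is left untouched.
    filled = {name: list(rounds) for name, rounds in scores.items()}
    for r in range(n_rounds):
        # Empirical distribution for this round: everyone who tipped.
        observed = [rounds[r] for rounds in scores.values() if rounds[r] is not None]
        if not observed:
            continue  # nobody tipped this round, so there is nothing to draw from
        for name, rounds in scores.items():
            if rounds[r] is None:
                filled[name][r] = rng.choice(observed)
    return filled


# Hypothetical three-round example; the numbers are invented.
example = {
    "alice": [6, 5, 7],
    "bob": [4, None, None],
    "carol": [7, 6, None],
}
season_totals = {
    name: sum(rounds) for name, rounds in fill_missed_rounds(example).items()
}
```

Because each draw comes from that round’s actual results, a common score is the most likely replacement, while an unusually good or bad week remains possible.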

Who are these “Experts” and what are these “Computers”?

The orange dots represent the tipping performance of the 2015 Herald Sun “Experts”. Their weekly tips can be found archived online. For point of reference, Glen McFarlane, Bruce Mathews and Mark Robinson finished the season on top with 139 correct tips. Mark Windley was bottom of the pack with 124 (after removing politician and joke tipsters).

The “Computer” or statistical tips were a bit harder to compile. Basically, I just went through any blogs I knew of and the Monash tipping comp to find anyone that took a methodological approach to compiling their tips before each round. Below is a list of every “Computer” tipster. If you know of any others that I’ve missed, do let me know.

- The Swinburne Computer run by Stephen Clarke. This system has been tipping since 1981, but there doesn’t seem to be much information publicly available on how it runs. It managed 134 correct tips last year.

- FootyForecaster makes weekly tips based on their own rating system. In 2015, they made 136 correct tips.

- MatterofStats is an AFL analysis blog run by Tony Corke. Along with other statistical investigations, he also posts weekly tips and betting plans based on a number of different criteria. I’ve chosen two of his tippers to appear here. Firstly, the Win_3 tipper, which was singled out as the preferred H2H tipper pre-season and is based on a conditional inference tree model. Win_3 tipped 133. Secondly, I’ve also chosen to include the C_Marg tipper, which is based on an Elo-style ratings model, ChiPS. C_Marg was the best of the “Expert” and “Computer” tipsters, scoring 141.

- The FootyMathsInstitute also posts weekly tips along with other analysis based on their own Elo-style model. They managed 136 correct tips in 2015.

- Darren O’Shaughnessy, one of the men behind RankingSoftware, is involved in the Monash Tipping comp, and as such, we can see his tipping results. I don’t know whether he uses his software for such tips or just wings them himself, but I’ve included him as a “Computer” here anyway. He got 140 correct tips last year.

Finally, I’ve also included the implied results of the Bookie Opening. This is what you would have achieved if you just looked at the opening odds at Pinnacle and tipped the favourite (or home team if equal odds) without any other thought. As you can see, this was a pretty successful strategy in 2015. In fact, only 1 “Computer” tipper out of all the experts and computers did better. If you had used this strategy you would have beaten over 90% of the fan tipsters and probably given yourself a pretty good chance of at least placing in any office tipping comp. But then again, where’s the fun in that?
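For anyone wanting to reproduce the Bookie Opening tipster, the rule is simple enough to write down. The sketch below assumes decimal odds (where the lower price is the favourite) and a hypothetical list of match records; the function names, field names, team labels and odds are illustrative and not taken from any real data feed.

```python
def tip_favourite(home_team, away_team, home_odds, away_odds):
    """Tip the team with the shorter (lower) decimal odds.

    On equal odds the home team gets the tip, as described above.
    """
    return away_team if away_odds < home_odds else home_team


def count_correct(matches):
    """Count how many favourites won across a list of match dicts."""
    correct = 0
    for m in matches:
        tip = tip_favourite(m["home"], m["away"], m["home_odds"], m["away_odds"])
        if tip == m["winner"]:
            correct += 1
    return correct


# Invented matches and odds, purely for illustration.
matches = [
    {"home": "Team A", "away": "Team B", "home_odds": 1.50, "away_odds": 2.60, "winner": "Team A"},
    {"home": "Team C", "away": "Team D", "home_odds": 3.10, "away_odds": 1.38, "winner": "Team C"},
    {"home": "Team E", "away": "Team F", "home_odds": 1.91, "away_odds": 1.91, "winner": "Team E"},
]
bookie_score = count_correct(matches)
```

In this made-up sample the favourite wins the first match, loses the second, and the home-team tiebreak decides the third.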

Other Notes

If you want to compare your own tips to this graph, bear in mind that it only includes results from the regular season. Unlike most tipping comps, I have counted draws (unless correctly tipped as such) as incorrect and have not given an extra point for the cancelled Adelaide v Geelong match. This gives a maximum possible score of 195, assuming you weren’t a savant with the draws.
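If you are rescoring your own tips to match, the rules above amount to something like the following. This is a rough sketch: the function name `score_tips` and the conventions of using the string `"draw"` for a drawn match and `None` for a cancelled one are my own assumptions for the example.

```python
def score_tips(tips, results):
    """Score a tipster under the rules used for the graph.

    `tips` and `results` are dicts keyed by a match id. A result is the
    winning team's name, the string "draw", or None for a cancelled match.
    """
    score = 0
    for match_id, result in results.items():
        if result is None:
            continue  # cancelled match: nobody gets a point
        if tips.get(match_id) == result:
            # A correct winner, or a draw that was tipped as a draw.
            score += 1
    return score


# Invented example: one win tipped correctly, one draw, one cancelled match.
results = {1: "Team A", 2: "draw", 3: None}
my_tips = {1: "Team A", 2: "Team B", 3: "Team C"}
my_score = score_tips(my_tips, results)
```

Under these rules a drawn match costs every tipster who picked a winner, which is why scores here may sit a little lower than on a typical comp ladder.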

Also bear in mind, this is just one year’s data and we can’t really draw too many watertight conclusions about who is a better tipster based on just this. I’d say most tipsters would have been in agreement for most games, only differing on a handful of the tough-to-predict contests. As such, a very small number of (surprisingly luck-driven) games probably caused most of the variance seen here. That being said, there does certainly seem to be some sort of general Computers > Experts > General fans pattern going on here.

Interesting.

Would love to see how Pinnacle closing odds fare. The closing market is more efficient, so I would expect an even better result than the openers.

I’ll look at that again sometime. If I recall correctly it was actually the same number of correct tips. Strange things happen when the metric you are considering is as binary as win/loss tipping. I imagine closing odds would look far more efficient if you compared the probability errors of each bet.